Thirty days.

That’s how long it took for the two most powerful AI models on the planet to make the exact same bet: move into Microsoft Excel and fight for the finance workflow.

Claude Opus 4.6 shipped February 5 with an Excel integration, enterprise plug-ins for investment banking and FP&A, and PwC as an implementation partner. GPT-5.4 followed March 5 with a ChatGPT for Excel add-in and live data connections to Moody’s, S&P Global, FactSet and LSEG. One month apart. Same application. Different architectures. Different ecosystems. Both aimed squarely at your team.

Meanwhile, Gemini 3.1 Pro quietly expanded to a 2-million-token context window. DeepSeek V3.2 kept slashing prices to fractions of a penny per token. And Anthropic’s enterprise agent launch triggered what traders called the “SaaSpocalypse”: on February 3, a single trading day wiped out roughly $285 billion in market capitalization across software and services stocks. Thomson Reuters suffered its biggest single-day drop on record. Salesforce and ServiceNow each fell around 7 percent. Intuit and Equifax lost more than 10 percent apiece.

The market wasn’t betting on which model wins. It was pricing in a world where AI copilots embedded in existing tools start displacing the standalone software those companies sell.

For finance leaders, the question just changed. It’s no longer “should we adopt AI?” It’s “which model fits which workflow, and how do we govern the portfolio?”

What’s Actually in the Box

Both Excel copilots work from a side panel inside the workbook. You describe what you need in plain language. The tool builds, updates or debugs models using the formulas and structures already in the file. Both ask permission before making edits. Both link explanations to specific cells. Both run calculations natively in Excel rather than inside the model’s black box. That last part is what makes either tool auditable.

The differences are in the ecosystem around the spreadsheet.

OpenAI went deep on data. GPT-5.4’s Excel add-in connects directly to Moody’s, S&P Global, Dow Jones Factiva, LSEG, MSCI and FactSet. Pull credit metrics, filings, transcripts and market data without switching windows. The company also shipped reusable “Skills” for recurring finance tasks: earnings previews, DCF analysis, comparables and investment memo drafting. The positioning is clear. OpenAI is building the finance terminal inside a chatbot.

Anthropic went wide on workflow. Claude in Excel is one piece of a broader enterprise play that started with Claude for Financial Services in July 2025. In late February, Anthropic launched pre-built agents for financial analysis, equity research, private equity and wealth management. PwC, Accenture and Deloitte signed on as implementation partners. Last week, the company launched a marketplace where enterprise customers can purchase Claude-powered tools from partners like Snowflake and Harvey using existing committed spend. The positioning is equally clear. Anthropic is building the operating system for enterprise knowledge work.

Two architectures. Two strategies. The spreadsheet is just the entry point.

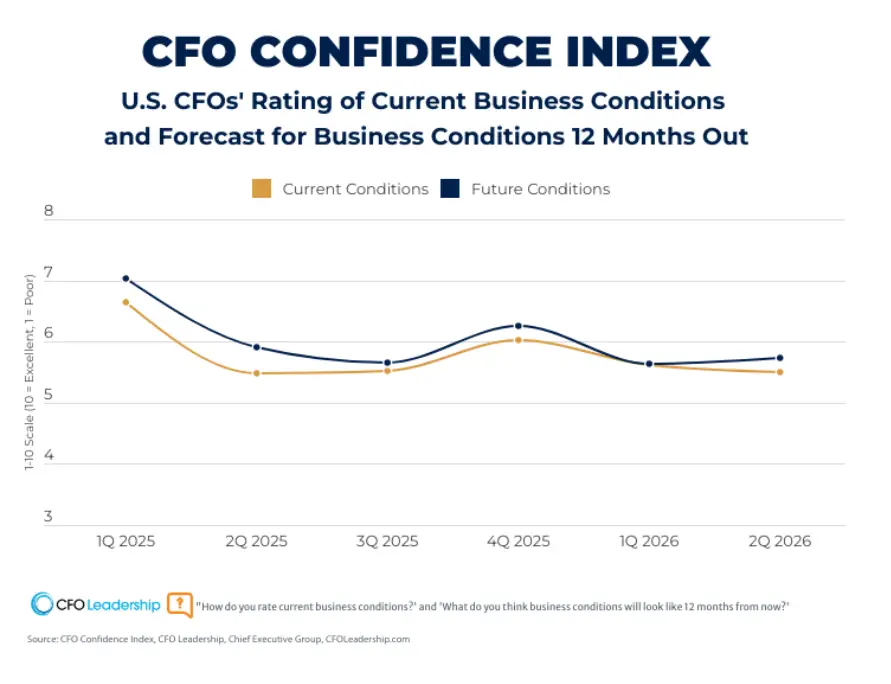

The 72/14 Problem

Here’s the stat that should reframe every model comparison you read this year. According to an RGP survey of 200 U.S. CFOs, 72 percent now use AI tools. Only 14 percent report clear, measurable ROI.

The barriers aren’t about model intelligence. Only 10 percent of surveyed CFOs fully trust their enterprise data. Eighty-six percent say legacy systems limit AI readiness. Sixty-eight percent cite skills gaps as their most significant challenge.

The organizations closing that gap share a pattern. They route specific models to specific workflows rather than standardizing on a single vendor. Brex automates 75 percent of expense transactions with Opus-based systems, hitting 94 percent policy compliance and saving roughly 169,000 hours per month. TELUS runs over 13,000 internal AI tools built on Claude, logging 500,000 hours saved and approximately $90 million in realized benefits. Lloyds Banking Group expects agentic AI to add roughly £100 million in value this year. BNY Mellon runs 117 agentic tools in production across operations and risk.

These aren’t “ChatGPT vs. Claude” stories. They’re architecture stories. The model is one variable. Integration fit, governance posture and data readiness are the other three.
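One way to picture that architecture is a routing table with a governance check in front of it. The sketch below is illustrative only: the workflow names, model labels and the `allows_external_data` flag are hypothetical placeholders, not any vendor’s API or any company’s actual setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str                  # hypothetical label for an approved model
    allows_external_data: bool  # governance flag: may it read untrusted documents?


# Hypothetical routing table; the assignments are placeholders for illustration.
ROUTES = {
    "financial_modeling": Route("model_a", allows_external_data=True),
    "diligence": Route("model_b", allows_external_data=True),
    "bulk_extraction": Route("low_cost_model", allows_external_data=False),
}


def pick_model(workflow: str, touches_external_data: bool) -> str:
    """Return the approved model for a workflow, enforcing the governance flag."""
    route = ROUTES.get(workflow)
    if route is None:
        raise KeyError(f"no approved model for workflow {workflow!r}")
    if touches_external_data and not route.allows_external_data:
        raise PermissionError(
            f"{route.model} is not approved for external data in {workflow!r}"
        )
    return route.model
```

The point of the design is that the policy lives in one reviewable table, so swapping a model for one workflow does not disturb the others.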

The Governance Layer You Can’t Skip

Picking a model for finance isn’t just a capability question. It’s a vendor risk question. Two developments from the past few weeks make that concrete.

The same week Anthropic confirmed its ARR had surged to $19 billion, nearly doubling from late 2025, Defense Secretary Hegseth designated the company a supply-chain risk. That label has historically been reserved for foreign adversaries. The dispute centers on restrictions Anthropic pushed for around military AI use. For finance teams evaluating long-term vendor commitments, this introduces a variable no benchmark measures.

On the cost-efficiency end, DeepSeek V3.2 is tempting. At $0.55 per million tokens, it’s 10 to 25 times cheaper than the proprietary alternatives. But a September 2025 NIST evaluation found DeepSeek models complied with 94 percent of malicious jailbreaking attempts, compared to 8 percent for U.S. reference models. Agents built on DeepSeek were 12 times more likely to fall victim to hijacking attacks, where malicious instructions embedded in external documents redirect the agent from its task. For any workflow touching external data, that’s a serious governance problem.

And then there’s cost architecture. GPT-5.4 runs at $2.50/$15 per million input/output tokens at standard rates, but the price doubles once input exceeds 272,000 tokens. Opus 4.6 starts at $5/$25 but hits a “200K cliff” where the entire request reprices to $10/$37.50 the instant you cross 200,000 input tokens. Both models punish sloppy context management. How you structure prompts and manage token budgets can swing costs by five figures annually.
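To see how sharp the cliff is, here is a minimal cost sketch using the rates quoted above. Two assumptions are worth flagging: that GPT-5.4’s “doubling” applies to both input and output rates, and that crossing a threshold reprices the whole request rather than only the excess tokens. The `Pricing` class and `request_cost` function are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per million tokens, with a long-context repricing threshold."""
    input_rate: float
    output_rate: float
    threshold: int           # input tokens above which the request reprices
    long_input_rate: float
    long_output_rate: float


# Rates as quoted in the article. ASSUMPTION: GPT-5.4 doubles both input and
# output rates past its threshold (the article only says "the price doubles").
GPT_5_4 = Pricing(2.50, 15.00, 272_000, 5.00, 30.00)
OPUS_4_6 = Pricing(5.00, 25.00, 200_000, 10.00, 37.50)


def request_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request in USD; the whole request reprices past the threshold."""
    over = input_tokens > p.threshold
    in_rate = p.long_input_rate if over else p.input_rate
    out_rate = p.long_output_rate if over else p.output_rate
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


at_limit = request_cost(OPUS_4_6, 200_000, 4_000)    # about $1.10
over_limit = request_cost(OPUS_4_6, 200_001, 4_000)  # about $2.15
```

Under these assumptions, one extra input token nearly doubles the cost of the request. At a hypothetical 50 such requests per working day over 250 days, that gap comes to roughly $13,000 a year.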

Three Takeaways

- The competition is the story. Two frontier models shipped Excel copilots in the same 30-day window. Gemini is expanding context. DeepSeek is compressing cost. The velocity of competition benefits finance teams, but only if you evaluate based on workflow fit rather than headline benchmarks.

- The 14 percent who prove ROI match models to processes. They don’t standardize on one vendor. They route modeling to one tool, diligence to another, high-volume extraction to a third. Governance wraps around all of it.

- Governance is the real selection criterion. Data integrations, token economics, vendor risk posture and enterprise controls will determine which model sticks in your stack long after today’s benchmark scores are obsolete.